Welcome to the Cumulus Support forum.

Latest Cumulus MX V3 release 3.28.6 (build 3283) - 21 March 2024

Cumulus MX V4 beta test release 4.0.0 (build 4019) - 03 April 2024

Legacy Cumulus 1 release 1.9.4 (build 1099) - 28 November 2014

(a patch is available for 1.9.4 build 1099 that extends the date range of drop-down menus to 2030)

Download the Software (Cumulus MX / Cumulus 1 and other related items) from the Wiki

Enhancements for correcting bad historical data and records

Moderator: mcrossley

-

dale

- Posts: 23

- Joined: Tue 29 May 2012 9:15 pm

- Weather Station: Ambient WS-5000

- Operating System: Windows 11

- Location: Glen Arbor, MI

Enhancements for correcting bad historical data and records

I've been using Cumulus 1 since 2002 with two different Oregon Scientific weather stations, and I am now upgrading to a new weather station and to Cumulus MX. I would like to correct the bad values in my historical data going back to 2002, but unless I'm missing something, the tools for doing so are not practical.

The root cause of the bad data is past sensor failures: the sensors reported bad values, and these were recorded in the monthly files, one record every 10 minutes. Sometimes a failure went unnoticed for weeks or months (while I was not on site), resulting in thousands of bad records in the monthly file. For example, a barometer reading of 18 or 0 inHg, when the lowest pressure ever recorded on Earth is about 25.6 inHg. Other examples in my data are barometer readings that are too high (above 32 inHg), temperatures above 140, a dew point of 158, excessive rain rates, etc.

It is not practical to fix this many errors with the monthly file editor. I have a few suggestions:

1) Enhance the nice createmissing tool to check the monthly files for invalid data and ignore any value that falls outside specified limits for its column (or some reasonable predefined limits). This would allow more accurate dayfile data to be regenerated by deleting the bad dayfile records and running createmissing. (I realize that errors that are within bounds cannot be corrected this way and need manual correction; see #3 below.)

2) Make a small enhancement to the records editor, which is used to recreate the various records files (monthly and all-time records): allow the user to click one of the suggested values on the right to populate the record, rather than having to type the new record in by hand.

3) I think that doing both 1 and 2 would be sufficient to clean up bad data for most practical purposes. It might also be nice to enhance the dayfile and monthly file editing tools to operate on multiple rows (delete several at once) or on columns (change a range of values in one column to a specific value). This would make it easier to correct bad data that spans multiple days or times, although this can be done with something like Excel, as long as you are careful to keep the date column in the proper format.
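The range check proposed in suggestion 1 can be approximated outside Cumulus with a short script that lists every out-of-range value for review. A minimal sketch, assuming a comma-separated monthly log with the outdoor temperature, dew point and sea-level pressure in zero-based fields 2, 4 and 10. The limits are illustrative, taken loosely from the figures above; the column mapping should be checked against the Cumulus wiki for your version, and files written under some regional settings may use a different separator, hence the `delimiter` parameter.

```python
import csv
from typing import Dict, Iterable, List, Tuple

# Hypothetical plausibility limits, keyed by zero-based column index.
# ASSUMPTION: 2 = outdoor temperature (F), 4 = dew point (F),
# 10 = sea-level pressure (inHg); verify against your own log layout.
LIMITS: Dict[int, Tuple[float, float]] = {
    2: (-80.0, 140.0),   # outdoor temperature
    4: (-80.0, 95.0),    # dew point
    10: (25.6, 32.0),    # sea-level pressure
}


def find_out_of_range(lines: Iterable[str],
                      limits: Dict[int, Tuple[float, float]] = LIMITS,
                      delimiter: str = ",") -> List[tuple]:
    """Return (line_number, column, value) for every checked field that is
    outside its limits or cannot be parsed as a number."""
    flagged = []
    for line_no, row in enumerate(csv.reader(lines, delimiter=delimiter), start=1):
        for col, (low, high) in limits.items():
            if col >= len(row):
                continue  # short or truncated line: nothing to check here
            try:
                value = float(row[col])
            except ValueError:
                flagged.append((line_no, col, row[col]))  # unparseable field
                continue
            if not low <= value <= high:
                flagged.append((line_no, col, value))
    return flagged
```

The output is only a list of suspects; deciding what to put in their place is still a manual step in the log editor.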

-

Altocumulus

- Posts: 136

- Joined: Sat 18 May 2013 1:58 pm

- Weather Station: Davis Vantage Pro2 with Solar

- Operating System: Windows 10 Home

- Location: NE Scotland

Re: Enhancements for correcting bad historical data and records

Shortly before Storm Arwen hit NE Scotland, I had a couple of glitches in my data that set off Cumulus's alarms, with every single record overwritten by the value 6.8.

So I am interested in finally moving over to MX (I have run the two in parallel for some months via VirtualVP).

I know I have errors in my data, as the OP does, usually from sensor malfunction or excessive and ridiculous rain rates.

When editing, is there an accepted value one can overwrite the data with (perhaps an "x") that any queries will ignore?

I would therefore like to second this suggestion, if it is possible.

-

Altocumulus

- Posts: 136

- Joined: Sat 18 May 2013 1:58 pm

- Weather Station: Davis Vantage Pro2 with Solar

- Operating System: Windows 10 Home

- Location: NE Scotland

Re: Enhancements for correcting bad historical data and records

I have an opportunity to do some serious checking of the dayfile for errors I know exist: -68 temperatures and 200 mm rain rates when no rain occurred. My Davis is being cleaned and fitted with a new temperature sensor, and I'm also adding a sun sensor.

The wiki doesn't explain what to do with erroneous data (or at least I haven't found where it does). I'd rather use an appropriate error entry as the replacement than guess a value. Is there a recommendation?

From what I gather, I 'just' need to edit the dayfile and not the various records?

- mcrossley

- Posts: 12770

- Joined: Thu 07 Jan 2010 9:44 pm

- Weather Station: Davis VP2/WLL

- Operating System: Bullseye Lite rPi

- Location: Wilmslow, Cheshire, UK

Re: Enhancements for correcting bad historical data and records

Cumulus does not (currently) have the concept of null data for the core data values. So for wind, temp, humidity, rain you have to enter some sort of value.
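Since some value has to be entered, one option for a short, isolated glitch in the 10-minute monthly log is to interpolate between the good readings on either side. The helper below is a hypothetical sketch, not a Cumulus feature; it assumes you have already read one column into a list and marked the bad readings as `None`.

```python
from typing import List, Optional


def fill_gaps_linear(values: List[Optional[float]]) -> List[Optional[float]]:
    """Replace None entries with values linearly interpolated between the
    nearest good readings on either side. A gap at the very start or end
    (no good neighbour on one side) is left as None for manual handling."""
    filled = list(values)
    n = len(filled)
    i = 0
    while i < n:
        if filled[i] is not None:
            i += 1
            continue
        start = i - 1                  # last good index before the gap
        end = i
        while end < n and filled[end] is None:
            end += 1                   # first good index after the gap (or n)
        if start >= 0 and end < n:
            lo, hi = filled[start], filled[end]
            span = end - start
            for k in range(i, end):
                filled[k] = lo + (hi - lo) * (k - start) / span
        i = end
    return filled
```

This only makes sense for short gaps. For a sensor that was stuck for weeks, interpolated values would be invented data, and for a known dry period the correct rain rate is simply zero.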

Once you have the dayfile edited, then you can look at your records.

The all-time and monthly all-time records files have their own log files, which show each new record and the date/time/value of the record it replaced. You can use these files to back out invalid records... Or you can use the record editors, which show the values in the records files alongside the records derived from the dayfile (and optionally from the monthly log files).
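Once the dayfile has been cleaned, a stored record can be checked by re-deriving it from the dayfile, which is broadly what the records editors display next to the stored values. A minimal sketch, assuming the relevant dayfile column has already been read into `(date, value)` pairs in date order; both function names are hypothetical.

```python
from typing import List, Tuple


def derive_record(rows: List[Tuple[str, float]],
                  highest: bool = True) -> Tuple[str, float]:
    """Return (date, value) of the highest (or lowest) value in the cleaned
    dayfile rows. On a tie this sketch keeps the earliest date."""
    if not rows:
        raise ValueError("no dayfile rows supplied")
    best_date, best_value = rows[0]
    for date, value in rows[1:]:
        better = value > best_value if highest else value < best_value
        if better:
            best_date, best_value = date, value
    return best_date, best_value


def record_needs_backing_out(stored_value: float,
                             rows: List[Tuple[str, float]],
                             highest: bool = True,
                             tol: float = 1e-9) -> bool:
    """True when the stored record cannot be reproduced from the cleaned data,
    e.g. a record set by a bad reading that has since been corrected."""
    _, derived = derive_record(rows, highest)
    return abs(stored_value - derived) > tol
```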

-

Altocumulus

- Posts: 136

- Joined: Sat 18 May 2013 1:58 pm

- Weather Station: Davis Vantage Pro2 with Solar

- Operating System: Windows 10 Home

- Location: NE Scotland

Re: Enhancements for correcting bad historical data and records

Thanks Mark,

I had come round to understanding that null or -999 values weren't acceptable.

Once the dayfile has been adjusted, what's the next best step to take?

On MX how long does it take the app to process the files and populate the tables?

Cheers

Geoff

- mcrossley

- Posts: 12770

- Joined: Thu 07 Jan 2010 9:44 pm

- Weather Station: Davis VP2/WLL

- Operating System: Bullseye Lite rPi

- Location: Wilmslow, Cheshire, UK

Re: Enhancements for correcting bad historical data and records

Altocumulus wrote (Wed 01 Dec 2021 7:38 am):

> Once the dayfile has been adjusted, what's the next best step to take?
>
> On MX how long does it take the app to process the files and populate the tables?

Try the records editors. The dayfile will load pretty quickly (a couple of seconds), but it depends on the platform.

It's a bit tedious to go through all the records (the monthly ones in particular), but if you have a spare hour or so...!

-

Altocumulus

- Posts: 136

- Joined: Sat 18 May 2013 1:58 pm

- Weather Station: Davis Vantage Pro2 with Solar

- Operating System: Windows 10 Home

- Location: NE Scotland

-

TheBridge

- Posts: 118

- Joined: Mon 16 Mar 2020 3:23 am

- Weather Station: Davis

- Operating System: Windows 10

Re: Enhancements for correcting bad historical data and records

Hi ALL,

Similar situation here. I had an errant wind speed of 157mph (I am fairly sure this is an error as my house is still standing this morning). I want to change that single value before it propagates into the monthly and annual records files.

Mark: is there any maintenance I need to do in MX to avoid this type of situation, or is it so rare that it's simpler to modify the data files manually?

Bridge

-

freddie

- Posts: 2477

- Joined: Wed 08 Jun 2011 11:19 am

- Weather Station: Davis Vantage Pro 2 + Ecowitt

- Operating System: GNU/Linux Ubuntu 22.04 LXC

- Location: Alcaston, Shropshire, UK

Re: Enhancements for correcting bad historical data and records

You can do it all through the interface screens now. Start with the log file editor. If you do the correction before rollover then you can skip the dayfile editor. Then use the records editors. You can load the values from the log files in the records editors, that's why it's best to do the logfile editor first. You shouldn't need to touch the actual files themselves.

You can also set "spike" values using the interface in order to filter out future crazy values.
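The general idea behind a spike filter can be sketched as a check on how far each new reading jumps from the last accepted one. This is an illustration under assumed behaviour, not Cumulus MX's actual spike-removal code; the `max_step` parameter is hypothetical, and the limits you set in the MX interface may be applied differently.

```python
from typing import Iterable, List, Optional


def remove_spikes(readings: Iterable[float],
                  max_step: float) -> List[Optional[float]]:
    """Replace any reading that differs from the last accepted reading by more
    than max_step with None. A rejected reading does not become the new
    reference, so a single spike cannot drag the baseline with it."""
    out: List[Optional[float]] = []
    last: Optional[float] = None
    for value in readings:
        if last is not None and abs(value - last) > max_step:
            out.append(None)   # spike: discard this reading
        else:
            out.append(value)
            last = value       # accepted reading becomes the reference
    return out
```

For example, `remove_spikes([12.0, 14.0, 157.0, 15.0], 20.0)` drops only the 157.0 reading. One design caveat: because rejected readings never become the reference, a genuine sustained step larger than `max_step` (say, after replacing a faulty sensor) would be rejected indefinitely, so a real filter needs some way to re-anchor.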

-

Benpointer

- Posts: 30

- Joined: Sun 02 Jan 2011 6:44 pm

- Weather Station: Davis Vantage Pro2

- Operating System: OSX Sierra

Re: Enhancements for correcting bad historical data and records

What is the 'log file editor'?

In Cumulus MX all I see under Edit is the following:

My Vantage Pro2 has gone a bit haywire over the past 24 hours and is posting a continuous rain rate of 281.0 mm per hour. I have temporarily set the rain multiplier to zero to stop it being recorded in Cumulus while I sort out the VP2 issue, but I would like to strip out the erroneous data. It has been dry throughout the period of rogue readings, which helps: I just need to set the rain rate to zero throughout.
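Outside Cumulus, an edit like this (setting the rain rate to zero for every record in a known dry window) can be scripted so that every other field is written back untouched, which also sidesteps the date-format risk of round-tripping through Excel. A minimal sketch, assuming comma-separated fields with the date (dd/mm/yy) and time (HH:MM) in the first two fields and the rain rate in zero-based field 8; check the field order for your version on the Cumulus wiki, and work on a copy of the file.

```python
import csv
import io
from datetime import datetime
from typing import List


def set_column_in_window(lines: List[str], col: int, new_value: str,
                         start: datetime, end: datetime,
                         delimiter: str = ",") -> List[str]:
    """Set field `col` to `new_value` on every line whose date/time falls in
    [start, end]; all other fields are written back exactly as read."""
    out = []
    for row in csv.reader(lines, delimiter=delimiter):
        if not row:
            out.append("")  # keep blank lines as they were
            continue
        # ASSUMPTION: field 0 is dd/mm/yy and field 1 is HH:MM.
        stamp = datetime.strptime(row[0] + " " + row[1], "%d/%m/%y %H:%M")
        if start <= stamp <= end and col < len(row):
            row[col] = new_value
        buf = io.StringIO()
        csv.writer(buf, delimiter=delimiter, lineterminator="").writerow(row)
        out.append(buf.getvalue())
    return out
```

Usage, for a hypothetical dry window: `set_column_in_window(lines, 8, "0.0", datetime(2021, 12, 5, 10, 0), datetime(2021, 12, 5, 10, 10))` rewrites only the rain-rate field of the records stamped 10:00 and 10:10.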

- PaulMy

- Posts: 3849

- Joined: Sun 28 Sep 2008 11:54 pm

- Weather Station: Davis VP2 Plus 24-Hour FARS

- Operating System: Windows8 and Windows10

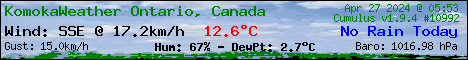

- Location: Komoka, ON Canada

Re: Enhancements for correcting bad historical data and records

> What is the 'log file editor'?

Hi,

It's the Edit from within the Monthly Logs view:

From Dashboard => Data logs => Monthly logs => select and Load a period => select a line => press Edit

Enjoy,

Paul

VP2+

C1 www.komokaweather.com/komokaweather-ca

MX https://komokaweather.com/cumulusmx/index.htm /index.html /index.php

MX https://komokaweather.com/cumulusmxwll/index.htm /index.html /index.php

MX https://komokaweather.com/cumulusmx4/index.htm

-

Benpointer

- Posts: 30

- Joined: Sun 02 Jan 2011 6:44 pm

- Weather Station: Davis Vantage Pro2

- Operating System: OSX Sierra